The smaller number indicates better performance. All numbers are for execution time in seconds. The results on Intel architecture are shown in the figure below. The Second State WebAssembly VM (SSVM) 0.6.4 with AOT (Ahead-of-Time compiler) optimization.On Graviton2, we used AWS’s recommended LLVM optimization settings. On Intel architecture, we used the -O3 flag for Clang (see this section). The native executables are compiled by the LLVM 10.0.0 and Clang toolchain.

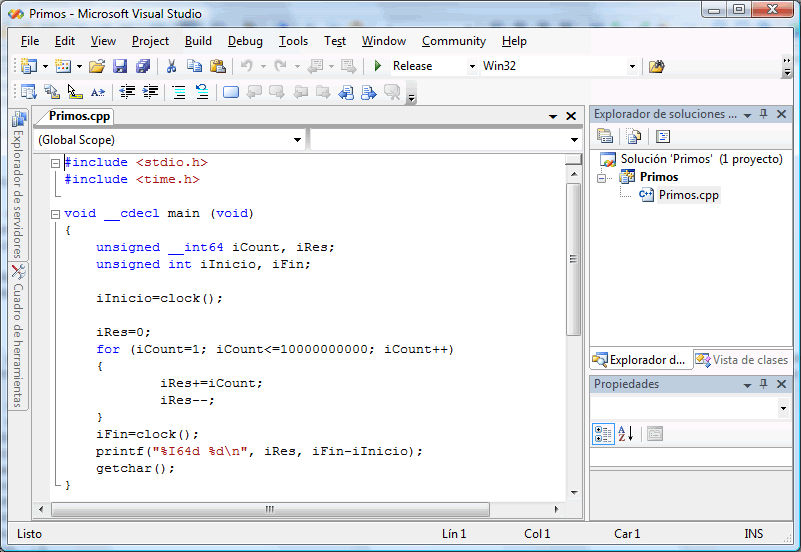

Amazon Linux 2 running on EC2 instances.Our software stack for the test cases is as follows. This design is called the WebAssembly Systems Interface (WASI). In the case of SSVM, the application must explicitly declare the resources, such as file system folders, it requires access to at startup time. The SSVM provides runtime safety, capability-based security, portability, and integration with Node.js.Ĭapability-based security requires the application to possess and present authorization tokens to access protected resources. The programs are written in Rust and compiled to WebAssembly bytecode. Test case #3: We run the benchmark tests in the Second State WebAssembly VM (SSVM). But it serves as a comparison point for the performance we could achieve under Docker. This scenario is somewhat unrealistic since few people could compile their apps to single binary executables, and ignore the ecosystems of tools and libraries provided by runtimes like Node.js. Test case #2: We also run the benchmark tests C/C++ native applications inside Docker with Ubuntu Server 20.04 LTS. Test case #1: To simulate the performance of a web application, we run the benchmark tests as a Node.js JavaScript application running inside Docker. We idled the instance long enough to accumulate sufficient CPU credits to sustain 100% CPU bursts throughout performance tests. To evaluate software stack performance, we ran the following test cases on an AWS t3.small instance, which features a physical CPU core consisting of 2 vCPUs. One of the most popular container runtimes is Docker, which is already optimized for performance. To preserve software safety, security, and cross-platform portability, we run the benchmark tests in containers and VMs. Next, let’s look at the exact test setup and some performance numbers! The source code and scripts of all test cases are available on GitHub. 
The binary-trees, repeated 21 times, allocates and deallocates large numbers of binary trees.The mandelbrot, repeated 15000 times, is to generate Mandelbrot set portable bitmap file.The fannkuch-redux, repeated 12 times, measures indexed access to an integer sequence.The nbody, repeated 50 million times, is an n-body simulation.They evaluate runtime performance after starting up. The following four benchmarks are from the Computer Languages Benchmarks Game, which provides crowd-sourced benchmark programs for over 25 programming languages.It evaluates performance in making operating system calls. The cat-sync test opens a local file, writes 128KB of text into it, and exits.The nop test starts the application environment and exits.The following two benchmarks evaluate cold start performance.The benchmarks we chose are the following. This is a deliberately simple test case to illustrate the raw performance. From the user’s point of view, the web service performance is likely to be bound by how fast a single CPU can execute. Most web application frameworks are running “one thread per request” by default. To illustrate this point, they noted that re-writing machine learning algorithms from Python to C / native code could improve performance by 60,000 times!įor the purpose of this study, we focus on single threaded performance.

The authors suggest that software improvements could replace Moore’s Law and drive productivity growth in the years to come.

Is the technology revolution as we know it over? Yet, the paper is optimistic about our technology future. That threatens 40 years of productivity and economic growth powered technology innovation. Computer hardware, such as the CPU and GPU, had hit the quantum limit and can no longer be made much faster or smaller. discussed one of today’s most important challenges in computer engineering - the end of Moore’s Law. In a recent research paper published in Science, MIT professors Leiserson and Thompson et al. Our conclusion is that Arm is more cost effective in the cloud, especially with lightweight runtimes that are close to the underlying operating system. In this article, we will use AWS’s Arm (Graviton2) and x86_64 (Intel) EC2 instances to evaluate computational performance across different software runtimes, including Docker, Node.js, and WebAssembly. With increasing adoption of high-performance Arm-based CPUs beyond mobile devices, it is important for developers to understand Arm’s performance characteristics for common server-side software stacks.
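To make the cat-sync benchmark a little more concrete, here is a minimal Rust sketch of what such a test could look like. It is not the code from our GitHub repository. The function name `cat_sync`, the default file name `cat_sync_sketch.txt`, and the reading of “128KB” as 128 × 1024 bytes are all assumptions made for illustration.

```rust
use std::fs::File;
use std::io::{self, Write};
use std::path::{Path, PathBuf};

/// Assumed payload size: "128KB" read as 128 * 1024 bytes.
const PAYLOAD_BYTES: usize = 128 * 1024;

/// Creates (or truncates) the file at `path`, writes 128 KB of ASCII text
/// into it, and asks the operating system to flush it to storage.
/// Returns the number of bytes written.
fn cat_sync(path: &Path) -> io::Result<usize> {
    let payload = vec![b'a'; PAYLOAD_BYTES];
    let mut file = File::create(path)?;
    file.write_all(&payload)?;
    file.sync_all()?;
    Ok(payload.len())
}

fn main() -> io::Result<()> {
    // Hypothetical convention: an optional first argument names the output
    // file; otherwise a relative file in the working directory is used.
    let path: PathBuf = std::env::args()
        .nth(1)
        .map(PathBuf::from)
        .unwrap_or_else(|| PathBuf::from("cat_sync_sketch.txt"));

    let written = cat_sync(&path)?;
    println!("wrote {} bytes to {}", written, path.display());
    Ok(())
}
```

Because this is a cold start test, the time that matters is the whole process lifetime measured from outside the program, not a timer inside `main`. When a program like this is compiled to WebAssembly and run under a WASI runtime, the directory containing the output file has to be among the resources granted to the application at startup, otherwise `File::create` returns an error.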
